LLMWise vs Postproxy

Side-by-side comparison to help you choose the right product.

LLMWise

Unify your team's AI tools with one smart API that automatically picks the best model for every task.

Last updated: February 28, 2026

Postproxy

Postproxy simplifies social media publishing by unifying multiple platforms behind one reliable API.

Last updated: February 28, 2026

Visual Comparison

LLMWise

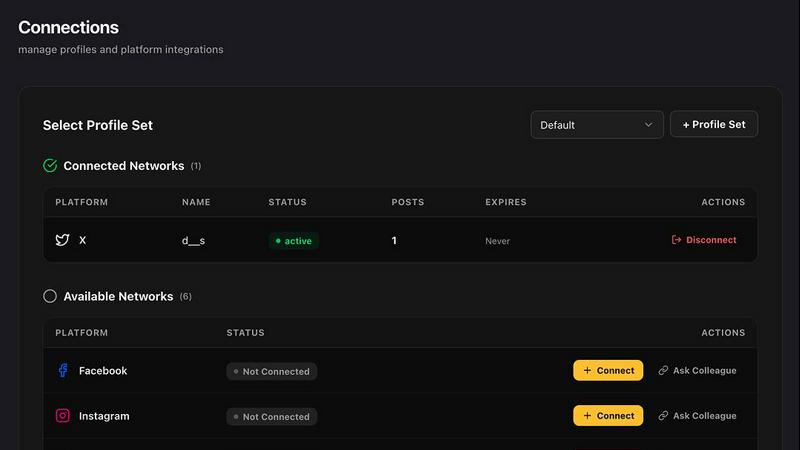

Postproxy

Feature Comparison

LLMWise

Intelligent Model Routing

LLMWise's smart routing analyzes each prompt and automatically directs it to the most suitable model in its catalog. Code generation tasks go to the strongest coding model, creative briefs to the best writing model, and analytical questions to the strongest reasoning model. This removes the guesswork and the manual switching between provider dashboards, so your team can focus on building products instead of managing AI infrastructure.
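To make the routing idea concrete, here is a minimal Python sketch of task-based routing. It is not LLMWise's implementation: the keyword classifier, the task categories, and the model names (`coding-model`, `writing-model`, `reasoning-model`, `general-model`) are hypothetical placeholders, and a production router would more likely use a trained classifier than keyword matching.

```python
from typing import Optional

# Hypothetical task categories mapped to placeholder model names.
# LLMWise's real routing rules and model catalog are not modeled here.
ROUTES = {
    "code": "coding-model",
    "creative": "writing-model",
    "analysis": "reasoning-model",
}
DEFAULT_MODEL = "general-model"

# Naive keyword heuristics standing in for a real prompt classifier.
KEYWORDS = {
    "code": ("function", "bug", "python", "typescript", "refactor"),
    "creative": ("story", "poem", "slogan", "tagline"),
    "analysis": ("analyze", "analyse", "compare", "explain why"),
}


def classify(prompt: str) -> Optional[str]:
    """Return the first task category whose keywords appear in the prompt."""
    text = prompt.lower()
    for category, words in KEYWORDS.items():
        if any(word in text for word in words):
            return category
    return None


def route(prompt: str) -> str:
    """Pick a model for the prompt, falling back to a general-purpose default."""
    category = classify(prompt)
    if category is None:
        return DEFAULT_MODEL
    return ROUTES[category]


if __name__ == "__main__":
    for p in ("Fix this Python function", "Write a short poem about rain", "Hello there"):
        print(f"{p!r} -> {route(p)}")
```

For example, `route("Fix this Python function")` returns `"coding-model"`, while a prompt that matches no category falls back to `"general-model"`.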

Compare, Blend, and Judge Modes

This set of orchestration tools lets teams draw on several models at once. Compare mode runs a single prompt across multiple models simultaneously and shows their answers side by side, with metrics on speed, cost, and length for easy evaluation. Blend mode goes further by combining the strongest parts of each model's output into a single, cohesive answer. Judge mode has models critique and evaluate each other's responses, adding an automated layer of quality assurance.
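The compare workflow can be sketched as a concurrent fan-out: send one prompt to several models at once and record latency, output length, and cost for each. This is an illustrative Python sketch, not LLMWise's API. The stub models `fast_model` and `slow_model` and the per-1,000-character prices are hypothetical; real providers usually bill per token.

```python
import asyncio
import time
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, List, Tuple

# A model is any async function from prompt to output text (hypothetical interface).
ModelFn = Callable[[str], Awaitable[str]]


@dataclass
class Result:
    model: str
    output: str
    latency_s: float
    length: int
    cost: float


async def _timed_call(name: str, fn: ModelFn, price_per_1k_chars: float, prompt: str) -> Result:
    """Call one model and record latency, output length, and an estimated cost."""
    start = time.perf_counter()
    output = await fn(prompt)
    latency = time.perf_counter() - start
    cost = len(output) / 1000 * price_per_1k_chars  # hypothetical pricing rule
    return Result(name, output, latency, len(output), cost)


async def compare(prompt: str, models: Dict[str, Tuple[ModelFn, float]]) -> List[Result]:
    """Send the same prompt to every model concurrently; results keep input order."""
    calls = [_timed_call(name, fn, price, prompt) for name, (fn, price) in models.items()]
    return list(await asyncio.gather(*calls))


# Stub models that simulate network latency; replace with real provider calls.
async def fast_model(prompt: str) -> str:
    await asyncio.sleep(0.01)
    return "short answer"


async def slow_model(prompt: str) -> str:
    await asyncio.sleep(0.05)
    return "a longer, more detailed answer"


if __name__ == "__main__":
    models = {"fast": (fast_model, 0.5), "slow": (slow_model, 2.0)}
    for r in asyncio.run(compare("Explain DNS", models)):
        print(f"{r.model}: {r.latency_s:.3f}s, {r.length} chars, cost {r.cost:.5f}")
```

Because the calls run concurrently, total wall-clock time is roughly that of the slowest model rather than the sum of all of them.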

Resilient Circuit-Breaker Failover

LLMWise is designed to keep your application's AI features running when a provider fails. Its circuit-breaker system monitors all connected providers in real time. If a primary model or provider experiences high latency or an outage, traffic is automatically rerouted to a predefined backup model. This built-in redundancy improves availability and reliability for production applications, so your team and your users see fewer interruptions.
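A circuit breaker with ordered failover can be sketched in a few dozen lines of Python. This is a generic version of the pattern, not LLMWise's code: the `CircuitBreaker` class, its thresholds, and `call_with_failover` are hypothetical. It trips only on raised errors, so a slow provider would have to be wrapped in a timeout that raises.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors, then blocks calls
    until `reset_after_s` seconds pass (after which one trial call is allowed)."""

    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def available(self) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # Half-open: let a trial call through once the cool-down has elapsed.
        return self.clock() - self.opened_at >= self.reset_after_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()  # (re)open the circuit


# (provider name, call function, breaker), in order of preference.
Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in order, skipping open circuits; return (provider, output)."""
    errors: Dict[str, str] = {}
    for name, call, breaker in providers:
        if not breaker.available():
            errors[name] = "circuit open"
            continue
        try:
            output = call(prompt)
        except Exception as exc:  # any error counts as a failure in this sketch
            breaker.record_failure()
            errors[name] = repr(exc)
            continue
        breaker.record_success()
        return name, output
    raise RuntimeError(f"all providers failed: {errors}")
```

Injecting `clock` keeps the breaker testable without real waiting; in production the default `time.monotonic` is used.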

Advanced Testing & Optimization Suite

LLMWise's built-in testing tools help teams improve their AI implementations systematically. You can create benchmark suites and run batch tests across models to measure performance on your own prompts, and set optimization policies that automatically prioritize speed, cost, or accuracy for different types of requests. Automated regression checks help ensure that updates to models or prompts don't degrade output quality, which supports continuous improvement and stable deployments.
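A regression check of this kind can be as simple as comparing per-prompt benchmark scores before and after a change. In this hypothetical sketch, scores are assumed to lie in [0, 1] (for example, the fraction of checks an output passed), and the function name and case IDs are illustrative rather than part of LLMWise.

```python
from typing import Dict, List


def regression_check(baseline: Dict[str, float], candidate: Dict[str, float],
                     tolerance: float = 0.02) -> List[str]:
    """Return benchmark case IDs whose score fell more than `tolerance` below baseline.

    Scores are assumed to be in [0, 1]. A case missing from the candidate run
    is treated as a regression, since it can no longer be verified.
    """
    regressions = []
    for case_id, base_score in baseline.items():
        new_score = candidate.get(case_id)
        if new_score is None or base_score - new_score > tolerance:
            regressions.append(case_id)
    return sorted(regressions)


if __name__ == "__main__":
    baseline = {"summarize-1": 0.90, "sql-2": 0.80, "tone-3": 0.75}
    candidate = {"summarize-1": 0.91, "sql-2": 0.70}
    print(regression_check(baseline, candidate))
```

In a CI pipeline, a non-empty result could be used to fail the build before a new model or prompt version ships.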

Postproxy

Unified API for Multiple Platforms

Postproxy provides a single API that removes the need for separate integrations with each social media platform. This unification streamlines publishing, letting users send content to multiple networks through one integration.

Automated Content Adaptation

The platform automatically transforms content to meet the unique requirements of each social media platform. This feature ensures that users do not have to manually adjust their posts for different formats, media limits, or validation rules, saving significant time and effort.
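One small piece of content adaptation, fitting a caption to each platform's length limit, can be sketched as follows. The limits in `CAPTION_LIMITS` are illustrative and platforms change them; X also weights links and some characters differently from a plain `len()` count, which this sketch ignores. It does not describe how Postproxy itself adapts content.

```python
# Illustrative caption limits in characters; verify against each platform's
# current documentation, since these change.
CAPTION_LIMITS = {"x": 280, "linkedin": 3000, "instagram": 2200}
ELLIPSIS = "\u2026"


def adapt_caption(text: str, platform: str) -> str:
    """Trim text to the platform's limit at a word boundary, adding an ellipsis."""
    limit = CAPTION_LIMITS[platform]
    if len(text) <= limit:
        return text
    cut = text[: limit - len(ELLIPSIS)]  # leave room for the ellipsis
    if " " in cut:
        cut = cut[: cut.rfind(" ")]  # drop the partial last word
    return cut.rstrip() + ELLIPSIS


if __name__ == "__main__":
    long_text = "word " * 100
    for platform in CAPTION_LIMITS:
        print(platform, len(adapt_caption(long_text, platform)))
```

Real adaptation also covers media sizes, aspect ratios, and hashtag or mention rules; the same per-platform lookup pattern extends to those checks.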

Robust Error Handling

Postproxy is designed to handle operational failures gracefully. Automatic retries, rate-limit management, and quota awareness are built in, so publishing workflows see fewer disruptions.
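Retries with exponential backoff, plus honoring a platform's retry hint when it is rate limited, are a standard way to implement this kind of error handling. The Python sketch below is generic, not Postproxy's code: the exception types `RateLimited` and `TransientError` are hypothetical stand-ins for whatever a platform client raises, any other error (bad credentials, invalid media) propagates immediately, and quota tracking is omitted.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error for a 'slow down' response, with an optional retry hint."""

    def __init__(self, retry_after_s: Optional[float] = None) -> None:
        super().__init__("rate limited")
        self.retry_after_s = retry_after_s


class TransientError(Exception):
    """Hypothetical error for failures worth retrying (timeouts, 5xx responses)."""


def publish_with_retries(publish: Callable[[], T], max_attempts: int = 5,
                         base_delay_s: float = 1.0, max_delay_s: float = 60.0,
                         sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `publish`, retrying rate-limit and transient errors with backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        backoff = base_delay_s * 2 ** (attempt - 1)
        try:
            return publish()
        except RateLimited as exc:
            if attempt == max_attempts:
                raise
            # Honour the platform's retry hint when it provides one.
            delay = exc.retry_after_s if exc.retry_after_s is not None else backoff
        except TransientError:
            if attempt == max_attempts:
                raise
            delay = random.uniform(0, backoff)  # exponential backoff, full jitter
        sleep(min(delay, max_delay_s))
    raise AssertionError("unreachable")  # the loop always returns or raises
```

Jitter spreads retries from many clients over time, so they do not all hit a recovering platform at the same moment.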

Explicit Publish States

With Postproxy, users can easily track the status of their publishing requests. The system provides clear feedback on what has succeeded, what has failed, and the reasons behind any failures, eliminating the need for manual checks and interventions.
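Explicit per-platform publish states can be modeled as a small data structure. This sketch uses hypothetical state names (`PENDING`, `PUBLISHED`, `FAILED`) and class names; Postproxy's actual status values and response format may differ.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class PublishState(Enum):
    PENDING = "pending"
    PUBLISHED = "published"
    FAILED = "failed"


@dataclass
class PlatformResult:
    state: PublishState = PublishState.PENDING
    reason: Optional[str] = None  # set when state is FAILED


@dataclass
class PublishJob:
    post_id: str
    results: Dict[str, PlatformResult] = field(default_factory=dict)

    def mark(self, platform: str, state: PublishState, reason: Optional[str] = None) -> None:
        """Record the outcome for one platform."""
        self.results[platform] = PlatformResult(state, reason)

    def failures(self) -> Dict[str, str]:
        """Map each failed platform to its reason."""
        return {p: r.reason or "unknown error"
                for p, r in self.results.items() if r.state is PublishState.FAILED}

    @property
    def done(self) -> bool:
        """True once no platform is still pending."""
        return all(r.state is not PublishState.PENDING for r in self.results.values())
```

Keeping one result per platform means a partial success (for example, published on X but rejected by LinkedIn) is reported precisely instead of collapsing into a single pass/fail flag.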

Use Cases

LLMWise

Development and Prototyping

Developers can rapidly prototype AI features using the 30 permanently free models. Teams can experiment with different model capabilities, test prompt effectiveness, and build proofs of concept without any financial commitment. Compare mode is especially useful for debugging prompts: seeing instantly how different models interpret the same instruction can save hours of trial and error.

Production Application Resilience

For teams running customer-facing AI applications, LLMWise's failover routing is critical. It ensures that if a primary AI service like GPT-4 has an outage, user requests are seamlessly handled by a backup model like Claude or Gemini, preventing downtime and maintaining a positive user experience. This turns a potential crisis into a minor, automated blip that your operations team doesn't need to manually manage.

Cost-Optimized AI Operations

Companies with existing API credits from major providers can use LLMWise's BYOK (Bring Your Own Keys) feature to plug in their keys and immediately benefit from smart routing and failover without changing their billing setup. Combining those existing credits with routing that sends each task to the most cost-effective model can cut costs significantly, often by over 40%.
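Cost-aware routing can be reduced to one rule: among the models that meet a quality bar for the task, pick the cheapest. The Python sketch below assumes you already have per-task quality scores (for example, from benchmark runs) and per-model prices; the `ModelOption` type, the model names, and the numbers in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # hypothetical price
    quality: float  # e.g. benchmark score in [0, 1] on this task type


def cheapest_adequate(options: Iterable[ModelOption], min_quality: float) -> Optional[ModelOption]:
    """Return the lowest-cost model whose quality meets the bar, or None."""
    adequate = [o for o in options if o.quality >= min_quality]
    return min(adequate, key=lambda o: o.cost_per_1k_tokens, default=None)


if __name__ == "__main__":
    options = [
        ModelOption("large", 10.0, 0.95),
        ModelOption("medium", 3.0, 0.88),
        ModelOption("small", 0.5, 0.70),
    ]
    choice = cheapest_adequate(options, min_quality=0.85)
    print(choice.name if choice else "no model qualifies")
```

With these example options, a bar of 0.85 selects `medium`, while a bar above 0.95 returns `None`, signalling that no model qualifies and the caller should fall back or escalate.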

Content Creation and Evaluation

Marketing and content teams can use the blend and judge modes to produce higher-quality drafts. A single request can generate variations from multiple creative models, then synthesize the strongest elements into a final piece. Judge mode can then provide automated feedback on tone, clarity, and alignment with brand guidelines, creating a collaborative workflow between human creativity and AI assistance.

Postproxy

Streamlined Social Media Management

Businesses can use Postproxy to manage their social media presence across multiple platforms from a single interface. This capability reduces the complexity of handling various accounts and simplifies the workflow for social media teams.

Automated Content Publishing

Marketing teams can automate the posting of AI-generated content. By integrating Postproxy into their existing pipelines, they can ensure timely and consistent publishing without the need for manual oversight.

Simplified Account Management

Postproxy allows organizations with numerous social media accounts to manage permissions and access effectively. This feature is crucial for companies with multiple brands or regional accounts, streamlining account control.

Scalable Publishing Solutions

As businesses grow, their publishing needs can become more complex. Postproxy facilitates scaling operations by allowing users to publish large volumes of content without adding complexity to their processes, making it ideal for high-demand environments.

Overview

About LLMWise

LLMWise is an orchestration platform for developers and teams building with large language models. It removes the complexity of managing multiple AI providers by offering a single, unified API to 62+ models from 20 leading providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. Its core value proposition is intelligent, task-based routing: you send a prompt, and LLMWise automatically selects the best model for the job, whether that is coding with GPT, creative writing with Claude, or translation with Gemini. This approach helps you get strong output for each task without vendor lock-in.

Built for developers who need performance and reliability, LLMWise goes beyond simple routing with orchestration modes such as side-by-side comparison, output blending, and model-judged evaluation. Automatic failover routing keeps applications running if a provider goes down. With flexible, credit-based pricing and the option to bring your own API keys (BYOK), teams can reduce costs significantly while gaining more flexibility. You start with 20 free credits and access to 30 permanently free models, so you can prototype, test, and build with zero commitment.

About Postproxy

Postproxy is designed to simplify and unify social media publishing across platforms such as Instagram, Facebook, YouTube, LinkedIn, and X. It provides a single API endpoint that handles the platform-specific parts of publishing, including OAuth management, rate limiting, retries, content adaptation, and analytics. It is particularly useful for developers and automation builders working with tools like n8n, Zapier, and CI/CD pipelines.

By turning publishing into predictable infrastructure, Postproxy lets users generate AI-driven content, route it according to specific rules, and publish at scale without manual adjustments. A free plan is available, and the service is designed to accommodate businesses of all sizes as their needs grow. Additionally, the Managing Control Panel (MCP) offers a user-friendly interface for managing publishing activity.

Frequently Asked Questions

LLMWise FAQ

How does the pricing work?

LLMWise uses a simple, pay-as-you-go credit system with no monthly subscriptions. You start with 20 free trial credits that never expire. After that, you purchase credit packs. You are only charged credits when you use a paid model; the 30 free models always cost 0 credits. You also have the option to use your own existing API keys from providers (BYOK), in which case you pay the provider directly at their rates and only use LLMWise credits for the orchestration features.

What are the free models for?

The 30+ free models serve multiple strategic purposes. They are perfect for initial prototyping and development, allowing you to build and test without cost. They act as a smart fallback layer for non-critical traffic or during retries if paid models fail. They are also essential for benchmarking, enabling you to compare the quality and performance of free versus paid models on your specific tasks before deciding where to route production traffic.

How quickly can I integrate LLMWise?

You can be up and running in under two minutes. The process involves signing up for an account to receive your free credits, generating a single API key from your dashboard, and then making your first request using the provided Python/TypeScript SDKs or cURL examples. This unified API approach means you replace multiple provider-specific integrations with one simple connection.

What happens if a model provider is down?

LLMWise's circuit-breaker failover system handles this automatically. The platform continuously monitors the health and latency of all connected model providers. If a primary model becomes unavailable or too slow, the system instantly reroutes your application's requests to a pre-configured backup model from a different provider. This ensures your application's AI features remain operational without any manual intervention required from your team.

Postproxy FAQ

What social media platforms does Postproxy support?

Postproxy currently supports major platforms including Instagram, Facebook, YouTube, LinkedIn, and X, allowing users to publish content across multiple channels effortlessly.

How does Postproxy handle platform-specific failures?

Postproxy is designed to manage operational failures by implementing automatic retries, rate limit handling, and clear reporting on publish states. This ensures a reliable publishing experience.

Is there a free plan available for Postproxy?

Yes, Postproxy offers a free plan, allowing users to get started without any financial commitment. This plan is ideal for individuals and small businesses looking to explore the platform's capabilities.

How can I integrate Postproxy into my existing workflows?

Integrating Postproxy is straightforward. Users can create an account, obtain an API key, connect their social media accounts, and start publishing content via the unified API, making it easy to include in existing automation setups.

Alternatives

LLMWise Alternatives

LLMWise is a unified API platform in the AI assistants category, designed to give developers a single access point to leading large language models like GPT, Claude, and Gemini. Its core innovation is intelligent auto-routing, which automatically selects the best-suited model for each specific prompt to optimize performance. Users often explore alternatives for various reasons, such as different pricing structures, the need for specific platform integrations, or a desire for a different set of management and testing features. Some teams may prioritize a different balance between cost, control, and convenience. When evaluating other solutions, it's wise to consider your team's primary needs. Key factors include the flexibility of the API, the depth of analytics and testing tools, the robustness of failover systems, and the overall pricing model. The goal is to find a tool that enhances your team's collaborative workflow without adding unnecessary complexity.

Postproxy Alternatives

Postproxy is a powerful tool designed for developers and automators, providing a unified API for seamless publishing across multiple social media platforms, including Instagram, Facebook, YouTube, LinkedIn, and X. By streamlining the complexities of social media management, Postproxy facilitates a more efficient publishing process, making it easier for users to manage their social media presence from a single endpoint. Users often seek alternatives to Postproxy for various reasons, such as exploring different pricing models, evaluating feature sets, or meeting specific platform integration requirements. When choosing an alternative, it’s essential to consider factors such as ease of use, scalability, integration capabilities, and the level of automation offered. Identifying the core needs of your publishing strategy will help ensure that the chosen solution aligns with your goals.