Agenta vs Mechasm.ai

Side-by-side comparison to help you choose the right product.

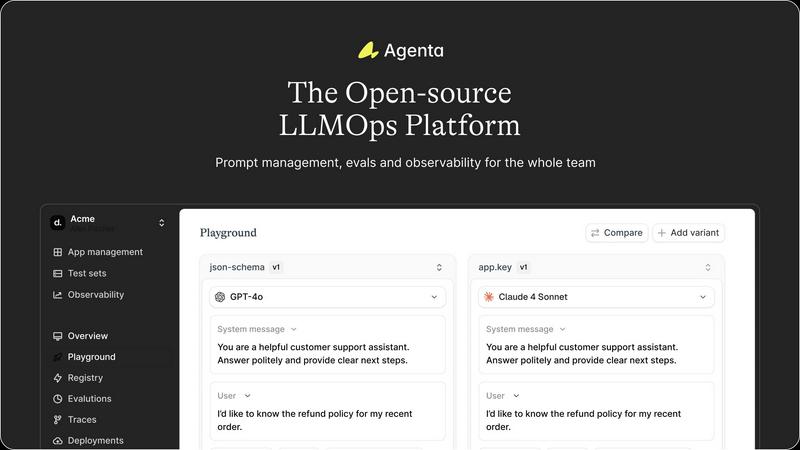

Agenta is the open-source platform that unites teams to collaboratively build and manage reliable LLM applications.

Last updated: March 1, 2026

Mechasm.ai empowers teams to create self-healing tests in plain English, making testing faster and more reliable.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta allows teams to centralize their prompts, evaluations, and traces in one platform, eliminating the confusion of scattered information across various tools. This feature ensures that all team members have access to the same data, facilitating collaboration and reducing the risk of miscommunication.

Unified Playground

The unified playground enables teams to experiment with different prompts and models side-by-side. This feature supports a complete version history of prompts, allowing teams to track changes effectively and revert if necessary. It also ensures model agnosticism, permitting teams to utilize the best models from any provider without being locked into a single vendor.

Automated Evaluation Framework

Agenta replaces guesswork with systematic, evidence-based evaluation processes. Teams can create a structured methodology to run experiments, track results, and validate every change made to the models. This framework integrates seamlessly with any evaluator, whether it is a built-in evaluator or a custom solution.
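To make the idea of systematic evaluation concrete, here is a minimal Python sketch. It does not use Agenta's SDK or reflect how Agenta is implemented. The names `exact_match`, `evaluate_variant`, `variant_a`, and `variant_b` are hypothetical, the test set is invented for illustration, and the model calls are replaced by stub functions so the example runs offline.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical test set: (input, expected output) pairs.
TEST_SET: List[Tuple[str, str]] = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("3*3", "9"),
]


def exact_match(output: str, expected: str) -> float:
    """Evaluator: 1.0 if the output equals the expected answer, else 0.0."""
    return 1.0 if output.strip() == expected else 0.0


def evaluate_variant(
    variant: Callable[[str], str],
    evaluator: Callable[[str, str], float],
    test_set: List[Tuple[str, str]],
) -> float:
    """Run one variant over the whole test set and return its mean score."""
    scores = [evaluator(variant(question), expected) for question, expected in test_set]
    return sum(scores) / len(scores)


# Stub variants standing in for two prompt/model configurations.
def variant_a(question: str) -> str:
    answers = {"2+2": "4", "capital of France": "Paris", "3*3": "6"}
    return answers.get(question, "")


def variant_b(question: str) -> str:
    answers = {"2+2": "4", "capital of France": "Paris", "3*3": "9"}
    return answers.get(question, "")


variants: Dict[str, Callable[[str], str]] = {
    "variant_a": variant_a,
    "variant_b": variant_b,
}

# Score every variant against the same test set with the same evaluator.
results = {name: evaluate_variant(fn, exact_match, TEST_SET) for name, fn in variants.items()}
best = max(results, key=results.get)
print(results, "best:", best)
```

The point of the pattern is that every variant is scored against the same test set by the same evaluator, so a change is accepted on measured results rather than impression. Swapping `exact_match` for a custom evaluator only requires a function with the same signature.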

Comprehensive Observability Tools

With advanced observability tools, Agenta allows teams to debug AI systems efficiently and gather user feedback in real time. Users can trace every request to find failure points, annotate traces collaboratively, and turn any trace into a test with a single click, thereby closing the feedback loop and enhancing the overall performance of AI applications.

Mechasm.ai

Self-Healing Tests

Mechasm.ai features self-healing tests that automatically adapt to changes in the user interface (UI). When UI elements change, the AI identifies the alterations and updates the selectors without manual input, which Mechasm.ai says reduces maintenance effort by up to 90%. This keeps tests relevant and functional despite ongoing development.
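The general idea behind selector healing can be illustrated with a deliberately simplified Python sketch. This is not Mechasm.ai's implementation: the product is described as using AI to identify changed elements, whereas this sketch uses a plain ordered list of fallback selectors. The function `find_with_healing`, the dictionary-based "pages", and the selectors are all hypothetical.

```python
from typing import Dict, List, Optional


def find_with_healing(
    page: Dict[str, str], primary: str, fallbacks: List[str]
) -> Optional[str]:
    """Return the first selector that resolves on the page, trying the primary first."""
    for selector in [primary, *fallbacks]:
        if selector in page:
            return selector
    return None


# Fake pages: each maps a selector to the text of the element it matches.
old_page = {"#submit-btn": "Submit"}
new_page = {"button[data-test='submit']": "Submit"}  # the id was removed in a redesign

stored_selector = "#submit-btn"
candidates = ["button[data-test='submit']", "text=Submit"]

# On the redesigned page the stored selector no longer resolves,
# so the first working fallback is found and persisted for future runs.
healed = find_with_healing(new_page, stored_selector, candidates)
if healed is not None and healed != stored_selector:
    stored_selector = healed

print(stored_selector)
```

A production system would also need to confirm that the healed selector points at the same logical element, which is the part an AI-based approach is meant to handle.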

Natural Language Testing

With Mechasm.ai, writing tests becomes as simple as typing in plain English. Users can describe their testing scenarios in everyday language, and the AI translates these descriptions into robust automation code. This feature democratizes testing by allowing non-technical team members to contribute meaningfully to quality assurance.

Cloud Parallelization

The platform supports cloud parallelization, enabling teams to scale their testing efforts effortlessly. This feature allows users to run hundreds of tests simultaneously in a secure cloud environment, significantly speeding up the QA process and facilitating faster deployments. The infrastructure is designed to handle extensive testing without any setup required.
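As a rough local analogy for running many tests at once, the sketch below uses Python's standard `concurrent.futures` module. It runs threads on one machine rather than in a cloud environment, and `run_test` is a hypothetical stand-in that sleeps instead of driving a browser, so it only illustrates why parallel execution shortens wall-clock time.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Tuple


def run_test(name: str) -> Tuple[str, str]:
    """Hypothetical test: sleeps briefly to simulate I/O-bound browser work."""
    time.sleep(0.05)
    return name, "passed"


test_names = [f"test_{i}" for i in range(20)]

start = time.perf_counter()
# Ten workers run the twenty tests in roughly two batches instead of one by one.
with ThreadPoolExecutor(max_workers=10) as pool:
    results = dict(pool.map(run_test, test_names))
elapsed = time.perf_counter() - start

print(f"{len(results)} tests finished in {elapsed:.2f}s")
```

Run sequentially, the same twenty tests would take about one second (20 × 0.05 s); with ten workers the total should be closer to a tenth of that, though exact timings depend on the machine.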

Comprehensive Analytics

Mechasm.ai includes actionable analytics that provide insights into test performance and team health. Users can access health scores, trend analysis, and performance tracking, allowing them to monitor the effectiveness of their testing strategies and make data-driven decisions to enhance their QA processes.

Use Cases

Agenta

Collaborative Prompt Development

Agenta is ideal for teams looking to collaborate on prompt development. By allowing product managers, developers, and domain experts to work together in a single environment, teams can iterate and experiment with prompts efficiently, leading to better model performance.

Systematic Experimentation

Teams can utilize Agenta to create a systematic experimentation process. This use case is particularly beneficial for organizations that require rigorous testing of model iterations, ensuring that every change is validated and backed by evidence before deployment.

Enhanced Debugging and Feedback Gathering

Agenta's observability features enable teams to debug AI systems effectively. By tracing requests and annotating failures collaboratively, teams can gather valuable feedback from users and domain experts, which can then be integrated into future iterations of the model.

Agile Deployment of AI Applications

With Agenta, organizations can fast-track the deployment of AI applications. The platform's structured workflows and centralized resources help teams move from development to production swiftly, ensuring that they can ship reliable AI products with confidence.

Mechasm.ai

Rapid Feature Testing

Teams can utilize Mechasm.ai to quickly create and execute tests for new features. By describing functionalities in plain English, they can generate tests almost instantly, allowing for rapid iterations and quicker feature releases without compromising on quality.

Collaborating Across Teams

Mechasm.ai fosters collaboration among diverse roles within engineering teams. Product managers, designers, and developers can all contribute to the QA process by writing tests in natural language, ensuring that all perspectives are considered in the testing phase.

Reducing Maintenance Overhead

By implementing self-healing tests, organizations can significantly reduce the time and resources spent on test maintenance. The AI automatically adjusts tests to accommodate UI changes, allowing QA teams to focus on higher-level tasks instead of manual updates.

Integrating with CI/CD Pipelines

Mechasm.ai seamlessly integrates with existing continuous integration and continuous deployment (CI/CD) workflows. This compatibility enables teams to receive immediate feedback on their code changes, enhancing deployment confidence and ensuring that quality assurance remains a priority throughout the development lifecycle.

Overview

About Agenta

Agenta is a collaborative, open-source LLMOps platform designed to unify AI teams around building and shipping reliable large language model (LLM) applications. It addresses common obstacles in AI development, such as unpredictable model behavior, fragmented workflows, and isolated teams. By providing a centralized, integrated environment, Agenta lets developers, product managers, and subject matter experts work together, replacing ad-hoc processes with a structured, evidence-based workflow.

Serving as the single source of truth for LLM development, Agenta covers the entire lifecycle, from initial prompt experimentation and rigorous evaluation to production observability and debugging. Its core value proposition is that every team member can contribute their expertise safely, compare iterations systematically, and validate changes before they affect end users, which speeds up the delivery of robust AI products.

About Mechasm.ai

Mechasm.ai is an automated testing platform designed for modern engineering teams that have outgrown traditional quality assurance (QA) methods. As software development evolves, legacy testing frameworks often slow teams down. Mechasm.ai takes an approach it calls Agentic QA, in which users write tests in plain English. This lets not just QA engineers but also developers, product managers, and designers contribute to quality assurance.

The platform's primary value proposition is its ability to generate resilient, self-healing tests that adapt to UI changes without manual intervention. By bridging the gap between human intent and technical execution, Mechasm.ai supports faster feature delivery and greater confidence in production deployments, so teams can ship high-quality code without fear of breaking existing functionality.

Frequently Asked Questions

Agenta FAQ

What is LLMOps and how does Agenta support it?

LLMOps, or Large Language Model Operations, refers to the practices and tools used to manage the lifecycle of LLM development. Agenta supports LLMOps by providing a collaborative platform that centralizes workflows, facilitates experimentation, and ensures systematic evaluation of model performance.

Can Agenta integrate with existing tools and technologies?

Yes, Agenta is designed to integrate with a variety of frameworks and model providers, including LangChain, LlamaIndex, and OpenAI. This flexibility allows teams to keep their preferred tools while benefiting from Agenta's infrastructure.

Is Agenta suitable for teams of all sizes?

Absolutely. Agenta is built to accommodate teams of all sizes, from small startups to large enterprises. Its collaborative features and centralized tools enhance productivity regardless of the team's scale, making it an excellent choice for any organization involved in AI development.

How does Agenta ensure data security and privacy?

Agenta prioritizes data security and privacy by implementing best practices in software development and data management. The platform is open-source, allowing teams to review the code and ensure compliance with their security requirements. Additionally, Agenta offers features that help teams manage sensitive information responsibly throughout the development lifecycle.

Mechasm.ai FAQ

How does Mechasm.ai ensure test resilience?

Mechasm.ai employs self-healing technology that automatically adjusts to UI changes. When a test fails due to a UI alteration, the AI attempts to fix the selectors and adapt the test, ensuring minimal disruption and maintaining test reliability.

Can non-technical team members write tests in Mechasm.ai?

Absolutely. One of the key features of Mechasm.ai is its natural language testing capability, allowing anyone on the team—regardless of technical expertise—to write tests in plain English, thus promoting collaboration across various roles.

What type of analytics does Mechasm.ai provide?

Mechasm.ai offers comprehensive analytics, including health scores, trend analysis, and performance tracking. These insights help teams monitor their testing effectiveness and make informed decisions to optimize their QA processes.

Is Mechasm.ai compatible with existing CI/CD tools?

Yes, Mechasm.ai integrates with popular CI/CD and collaboration tools like GitHub Actions, GitLab, and Slack. This integration allows teams to incorporate testing into their workflows without additional setup, streamlining the deployment process.

Alternatives

Agenta Alternatives

Agenta is an open-source platform designed for collaborative development and management of reliable LLM applications. As teams strive to enhance their AI projects, they often encounter challenges like unpredictable model behavior and disjointed workflows. This prompts users to seek alternatives that might better suit their needs, whether due to pricing structures, feature sets, or specific platform requirements. When evaluating options, it’s essential to consider factors such as ease of collaboration, the flexibility of experimentation, and the robustness of evaluation frameworks to ensure a smooth transition and continued productivity.

Mechasm.ai Alternatives

Mechasm.ai is an advanced automated testing platform designed to empower modern engineering teams through its innovative approach to quality assurance. It belongs to the categories of AI Assistants, No Code & Low Code tools, and Tech Tools, facilitating collaboration among QA engineers, developers, product managers, and designers. Users often seek alternatives to Mechasm.ai for various reasons, including pricing structures, feature sets, or specific platform requirements that better align with their team's needs. When choosing an alternative to Mechasm.ai, it’s essential to consider several factors. Look for platforms that offer natural language authoring capabilities, self-healing tests, and seamless execution environments. Additionally, evaluate how well the alternative can integrate with your existing workflows and whether it fosters collaboration across different team members in the testing process.