Mod vs OpenMark AI

Side-by-side comparison to help you choose the right product.

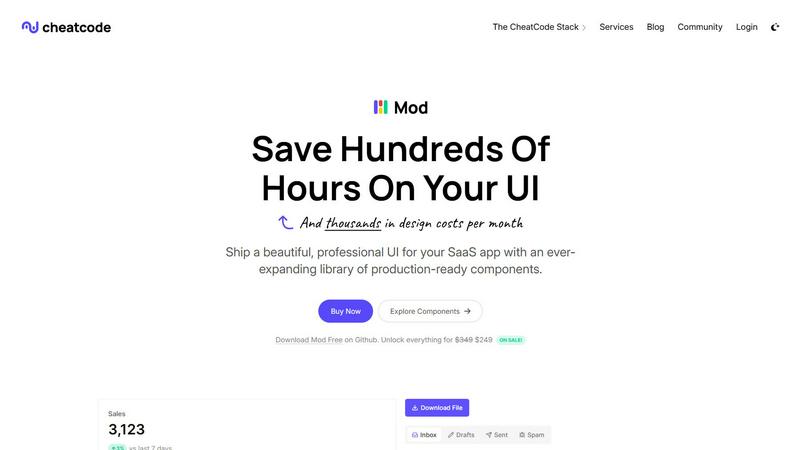

Mod is a collaborative CSS framework that accelerates SaaS app development with a rich library of ready-to-use compon...

OpenMark AI helps your team benchmark over 100 AI models on your specific task to find the best one for cost, speed, and quality.

Last updated: March 26, 2026

Feature Comparison

Mod

Comprehensive Component Library

Mod ships a library of over 88 pre-built, customizable components covering a wide range of UI elements. Assembling applications from this library helps teams keep their design consistent and saves development time.

Extensive Styling Options

Mod provides 168 styles that developers can use to match an application's appearance to specific branding guidelines. This range gives teams room to build interfaces that look distinct without writing every style from scratch.

Dark Mode Functionality

Mod includes built-in support for dark mode, which a growing number of users expect. Developers can offer a darker theme that is easier on the eyes for users who prefer it, improving usability and accessibility.

Responsive and Mobile-First Design

The framework is designed with a mobile-first approach, ensuring that applications built with Mod are responsive and function seamlessly across devices of all sizes. This focus on responsive design helps teams deliver a superior user experience, regardless of how users access the application.

OpenMark AI

Plain Language Task Description

Describe the specific task you need an AI model to perform using simple, natural language—no coding required. Whether it's data extraction, content classification, translation, or building a RAG pipeline, you can define your exact success criteria. The platform then translates this into structured prompts to ensure every model in your benchmark is tested against the same, relevant challenge, fostering a shared understanding across technical and non-technical team members.

Multi-Model Benchmarking in One Session

Run your defined task against a wide selection of models from leading providers like OpenAI, Anthropic, and Google in a single, unified session. This eliminates the tedious process of manually configuring separate API keys and writing individual test scripts for each model. Your team gets immediate, side-by-side comparisons, streamlining the evaluation process and enabling faster, consensus-driven decision-making.

Comprehensive Performance Metrics

Move beyond marketing claims with metrics derived from real API calls. Compare not just token cost, but the actual cost per request, latency, and a scored assessment of output quality for your task. Most importantly, OpenMark runs multiple iterations to measure stability and variance, showing you how consistent a model's performance is. This holistic view ensures your team chooses a model that is both cost-effective and reliably high-quality.
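The difference between token cost and cost per request can be illustrated with a small sketch. This is not OpenMark's implementation; the `Call` record, the prices, and the token counts below are all made-up assumptions, chosen only to show that a model with a lower per-token price can still cost more per request if it produces longer outputs.

```python
from dataclasses import dataclass


@dataclass
class Call:
    """One recorded API call. All fields are illustrative assumptions."""
    input_tokens: int
    output_tokens: int
    latency_s: float


def cost_per_request(calls, usd_per_1k_input, usd_per_1k_output):
    """Average dollar cost per call, given hypothetical per-1k-token prices."""
    total = sum(
        c.input_tokens / 1000 * usd_per_1k_input
        + c.output_tokens / 1000 * usd_per_1k_output
        for c in calls
    )
    return total / len(calls)


def mean_latency(calls):
    """Average wall-clock latency per call, in seconds."""
    return sum(c.latency_s for c in calls) / len(calls)


# Hypothetical runs: the "verbose" model has half the token price
# but writes roughly ten times as many output tokens.
terse = [Call(500, 100, 0.8), Call(500, 120, 0.9)]
verbose = [Call(500, 900, 2.1), Call(500, 1100, 2.4)]

terse_cost = cost_per_request(terse, usd_per_1k_input=0.002, usd_per_1k_output=0.006)
verbose_cost = cost_per_request(verbose, usd_per_1k_input=0.001, usd_per_1k_output=0.003)

print(f"terse:   ${terse_cost:.5f}/request, {mean_latency(terse):.2f}s")
print(f"verbose: ${verbose_cost:.5f}/request, {mean_latency(verbose):.2f}s")
```

With these invented numbers, the cheaper-per-token model ends up roughly twice as expensive per request, which is why per-request figures from real calls are more informative than a price sheet.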

Hosted Credits System

Simplify collaboration and budgeting with a unified credits system. Team members can run benchmarks without needing to provision or share sensitive individual API keys from different vendors. This centralized approach makes it easier to manage testing costs and track usage across projects, with the whole team drawing on the same budget.

Use Cases

Mod

Rapid SaaS Development

Mod is ideal for startups and established businesses looking to rapidly develop and deploy SaaS applications. Its extensive component library and styling options enable teams to build applications quickly without sacrificing quality or design integrity.

Customizable User Interfaces

Design teams can leverage Mod to create highly customizable user interfaces that align with client branding. The flexibility of the framework allows for unique design implementations that can set applications apart in a competitive market.

Collaborative Development

Because Mod is framework-agnostic, teams working in different technologies can share the same components and styles. This lets them collaborate on a consistent interface regardless of their preferred tech stack.

Enhanced User Experience

By utilizing Mod's responsive design and dark mode features, developers can enhance the overall user experience of their applications. This focus on user-centric design can lead to higher user satisfaction and retention, crucial for the success of any SaaS product.

OpenMark AI

Validating Model Choice Before Development

Development teams can collaboratively test multiple LLMs on a prototype task before committing engineering resources. This ensures the selected model fits the technical requirements and budget constraints, preventing costly rework later and aligning the entire team on a proven, data-backed foundation for the upcoming build phase.

Optimizing Cost-Efficiency for Production Features

Product and engineering leads can work together to find the most cost-effective model for a live feature without sacrificing quality. By benchmarking on real user prompts, teams can identify if a smaller, less expensive model performs just as well as a premium one for their specific use case, directly improving the feature's ROI through cooperative analysis.
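One way to frame this decision is sketched below: pick the cheapest model whose average quality score is within a chosen tolerance of the best score. The function name, the model names, the scores, and the costs are all hypothetical and stand in for whatever a real benchmark run reports; the selection rule is an assumption, not a documented OpenMark feature.

```python
def cheapest_within_tolerance(results, tolerance=0.02):
    """Return the cheapest model whose quality is close to the best.

    results maps a model name to (mean_quality, cost_per_request_usd).
    A model is eligible if its quality is no more than `tolerance`
    below the highest quality in the set.
    """
    best_quality = max(quality for quality, _ in results.values())
    eligible = {
        name: cost
        for name, (quality, cost) in results.items()
        if quality >= best_quality - tolerance
    }
    # Among eligible models, pick the one with the lowest cost.
    return min(eligible, key=eligible.get)


# Made-up benchmark summary for illustration only.
results = {
    "premium-model": (0.93, 0.0120),
    "small-model": (0.92, 0.0015),
    "tiny-model": (0.78, 0.0004),
}

choice = cheapest_within_tolerance(results)
print(choice)
```

In this invented example the smaller model is selected because it is within 0.02 of the top score at a fraction of the cost; setting `tolerance=0.0` would fall back to the highest-scoring model.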

Ensuring Output Consistency and Reliability

Teams building features where consistent outputs are critical—such as data extraction pipelines or automated customer support—can use OpenMark to stress-test models. By analyzing variance across multiple runs, the team can collaboratively identify and select a model that delivers stable, predictable results, building trust in the AI component's performance.

Comparing New Model Releases

When a new model version is released, teams can quickly benchmark it against their currently used model on their exact tasks. This facilitates a streamlined, evidence-based upgrade discussion, allowing the team to collaboratively assess if the new model offers meaningful improvements in quality, speed, or cost for their application.

Overview

About Mod

Mod is a CSS framework designed specifically for Software as a Service (SaaS) applications. It offers over 88 components, 168 distinct styles, two themes, and more than 1,500 icons, giving developers broad room to customize their interfaces. The framework is built with a mobile-first approach, so applications are responsive across devices. Mod is framework-agnostic and works with stacks such as Next.js, Nuxt, Vite, Svelte, Rails, and Django, which lets solo developers and teams adopt it without changing their tooling, reduce design costs, and shorten project timelines. Pricing is straightforward, and the framework receives annual updates.

About OpenMark AI

OpenMark AI is a collaborative web platform designed to empower development and product teams to make data-driven decisions when integrating AI. It eliminates the guesswork from selecting the right large language model (LLM) for a specific feature or workflow. The core value proposition is enabling teams to benchmark models side-by-side on their exact tasks using plain language, without the need for complex setup or managing multiple API keys. By running the same prompts against a vast catalog of over 100 models in a single session, teams can compare critical real-world metrics like cost per request, latency, scored output quality, and—crucially—output stability across repeat runs. This focus on consistency reveals performance variance, ensuring you select a reliable model, not just one that got lucky once. OpenMark AI is built for pre-deployment validation, helping teams collaboratively find the optimal balance of cost-efficiency and quality for their unique application before any code is shipped.

Frequently Asked Questions

Mod FAQ

What types of projects is Mod best suited for?

Mod is highly versatile and is best suited for SaaS applications, web applications, and any project that requires a robust and responsive user interface. Its extensive component library and styling options make it adaptable for various use cases.

Is Mod compatible with all front-end frameworks?

Mod is framework-agnostic, so it is not tied to any one stack. It can be used with popular frameworks and build tools such as Next.js, Nuxt, Vite, Svelte, Rails, and Django, which lets developers keep their preferred tech stack.

How often does Mod receive updates?

Mod is committed to providing annual updates, ensuring that users have access to the latest features, components, and styles. This ongoing support helps teams maintain their applications' relevance and functionality over time.

Can I use Mod for mobile app development?

While Mod is primarily designed for web applications, its responsive and mobile-first design principles make it suitable for web-based mobile applications. Developers can leverage Mod to create mobile-friendly interfaces that perform well on various devices.

OpenMark AI FAQ

How does OpenMark AI calculate the quality score?

The quality score is determined by evaluating the model's outputs against the specific task you defined. While the exact scoring methodology is tailored to the task type, it generally involves automated checks for accuracy, completeness, and adherence to your instructions. This objective scoring helps teams move beyond subjective opinions to a shared, quantitative understanding of model performance.

Do I need my own API keys to use OpenMark AI?

No, you do not need to configure or manage separate API keys from providers like OpenAI or Anthropic. OpenMark operates on a hosted credits system. You purchase credits through the platform and use them to run benchmarks, which are executed via OpenMark's own integrations. This simplifies setup and means provider keys never have to be shared across the team.

What is the benefit of testing for stability/variance?

Testing stability by running the same prompt multiple times shows you whether a model's good output was a lucky one-off or a reliable result. High variance means the model is inconsistent, which is a major risk for production features. This insight allows your team to choose a predictably good performer, ensuring a better user experience and reducing operational headaches.
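A small worked example makes the point concrete. The scores below are invented for illustration and do not come from any real benchmark; the sketch uses the sample standard deviation from Python's `statistics` module as one reasonable measure of variance, which may differ from how OpenMark reports stability.

```python
import statistics


def stability(scores):
    """Return (mean, sample standard deviation) for repeated-run scores."""
    return statistics.mean(scores), statistics.stdev(scores)


# Hypothetical quality scores from five runs of the same prompt.
steady = [0.84, 0.86, 0.85, 0.85, 0.85]
erratic = [0.99, 0.60, 0.95, 0.70, 1.00]

steady_mean, steady_sd = stability(steady)
erratic_mean, erratic_sd = stability(erratic)

print(f"steady:  mean={steady_mean:.3f} sd={steady_sd:.3f}")
print(f"erratic: mean={erratic_mean:.3f} sd={erratic_sd:.3f}")
```

Both made-up models average about 0.85, and the erratic one even produces the single best run. Only the spread across runs shows that it also produces the worst ones, which a one-shot comparison would miss.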

Can I use OpenMark for tasks beyond simple text generation?

Yes. OpenMark is designed for a wide variety of task-level benchmarking, including classification, translation, data extraction, question answering, RAG (Retrieval-Augmented Generation) systems, and image analysis with multimodal models. Describe the task your project needs, and you can benchmark models on that specific challenge.